Explaining the 8-fields model

Many organizations have invested heavily in training and certification or, more recently, in serious games and gamification, all in an attempt to change behavior and improve performance. However, many organizations, if not most, fail to get the hoped-for value and the sustainable change in behavior from their investment. This is particularly true of ITSM (IT Service Management) training. For too long in the world of ITSM and ITIL we have focused on the certificate and not on the impact we want to create using the ITSM theory. The same applies to project management theory such as PRINCE2. Why is this? In this article I want to look at a model that answers two key questions: ‘How do we evaluate our training?’ and ‘What is it we want to achieve?’ These are logical questions that you would expect everybody to ask before investing in training. However, this isn’t always the case, or it isn’t always done in an effective way. I will explain this using the 8-fields model. Using the 8-fields model consistently will help ensure that you gain the maximum return on investment from your training and achieve sustainable, measurable results.

In this article I will first explain how the 8-fields approach works, and then I will show a case in which we used it together with a Serious Business game (Apollo 13 – An ITSM Case Experience).

First of all, let’s look at the question ‘How do we evaluate training?’. I will use Kirkpatrick’s model for this. Kirkpatrick describes 4 levels of evaluation.

In short:

4. Business Results

3. Functioning

2. Learning/proving

1. The learning process

Most training companies and organizations ‘purchasing’ training focus on levels 1 and 2. It is easy money: fill a class with 18-20 students, run an ITIL Foundation course, have them sit the exam and pass (or not). The training companies then proudly announce and market “Highest scoring training!”, “Best performing trainers!”, “Highest pass rate”, or some even ‘Guaranteed pass! Keep taking it for free until you get the certificate’! But the Business Results still do not always materialize. 70 – 80% of organizations are NOT getting the hoped-for value from these investments.

Many customer organizations have decided to ‘do’ ITIL and simply purchase ITIL training and send their staff on these courses without much thought about ensuring that what is learnt actually results in observable, improved behavior and demonstrated performance improvements. Often we simply ‘hope’ that the theory will be used. Others purchase a serious game or apply some kind of gamification to make the learning experience more fun and to help people understand how to translate the theory into practice, and then ‘hope’ that people apply the theory back at the workplace.

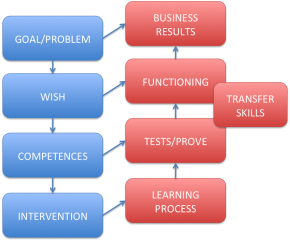

The 8-fields model. This model was designed by Joseph Kessels and Cora Smit (Netherlands), both experts in designing and developing corporate training. I gained my Master’s degree in Human Resource Development under Joseph Kessels, and he taught me this model, which I still use for many purposes.

The 8-fields model

Realization steps

On the right side you will recognize the 4 levels of Kirkpatrick. I have added one extra box called “Transfer Skills”. I think we can increase the chance that the training will lead to real Business Results if we also evaluate the transfer skills of the students: “Are they able to translate the knowledge, evaluated at level 2, into new functioning?” We must add exercises to our training and our testing to help students transfer the knowledge to their daily work.

Needs/Wish steps

On the left side we see the aspects to identify the learning objectives and learning needs. I will focus on these items in more detail.

Goal/Problem – We need to know the problem we want to solve or the objectives we want to meet with our training intervention, whether it is traditional classroom training or game-based training. The problem must be a real problem to be solved. This is WHY we need to train. For example:

- The costs of solving incidents are too high; we hire externals for $200K a year.

- Projects run over time and over budget; this costs us $150K a year.

Very often we ask people in our simulation sessions, ‘Why are you DOING ITIL? What is the problem you are hoping to solve?’ In more than 90% of the cases we get blank stares!

Wish – We need to identify WHAT we would like to see following the training. This is a description of the situation or ‘behavior’ we expect to see when the problem is solved. For example:

- We see that employees on our helpdesk are able to solve 60% of the Windows 7 calls themselves.

- We see employees of the second line teams sharing their knowledge about work-arounds so that Helpdesk employees can re-use this knowledge.

- Process managers are able to make a clear business case for process improvement initiatives, linked to Value, Outcomes, Costs and Risks.

- We see project managers who are able to manage their costs using their own calculations and financial instruments.

- We see project managers who can take effective actions if the project is going over time or over budget.

Competences – Now we know WHAT we want to see after the training. The next step is to identify what knowledge, skills and behavior we need to develop during the training. Examples:

- Helpdesk must learn the basics of Windows 7 so they can solve the top 20 incidents.

- Second Line support must learn how to document solutions in the tool so helpdesk can use this knowledge to solve incidents. They must also recognize the need for doing this.

- Process managers must learn how to make a business case for a service improvement initiative.

- Second Line support must learn how to transfer the knowledge to Helpdesk employees so they understand and can re-use the knowledge to solve incidents.

- Project Managers must learn how to use the project management tool.

- Project Managers must learn how to manage issues, how to identify possible risks on time and budget.

Intervention – This is the design of the learning intervention. It is HOW we are going to learn. It describes the whole process, including the interventions. Examples:

- The Helpdesk employees will make a list of the top 20 Windows 7 incidents of the last 4 months. This list will be discussed with a training partner, and we will ask them to prepare a 1-day training to teach the helpdesk employees the basics of Windows 7 so that they can solve those top 20 incidents. This action will be repeated every 2 months.

- Second line support will start documenting all solutions of recurring incidents in the tool. They will get coaching from the supplier.

- Process managers will make a business case for all improvement initiatives, using facts and figures and relating them to business impact. These will be agreed with managers and a group of business representatives.

- Every Friday afternoon there will be a 2-hour session in which one of the second line support engineers explains how to solve the top 5 incidents using the knowledge in the tool. Helpdesk will give feedback.

- There will be training for project managers in using the tool.

- 5 of our project managers will start an AL (Action Learning) project to learn how to manage projects. This will be supported by an AL coach.

Consistency In the Model

The strength of the model is the consistency check between the 8 fields. Let us now focus on some of these checks:

Each box must be vertically consistent with the box above!

We must check that the things we want to learn in competences really will create the wish-situation.

If we see new functioning (on the right) we must be sure that this will create Business Results.

If not, we must change the description in one of the boxes. This means during design of the learning process we must check, check and double check if this consistency is ok.

Each ‘wish-box’ must be consistent with the ‘evaluation-box’ on the same horizontal level.

We must test the competences we train using effective tests. In our example a test could look like this: “After the training, the helpdesk students get 10 Windows 7 incidents from the top 20 and must be able to solve them.” Or: “The second line support engineers must document 10 solutions using the tool; then the Helpdesk employees receive 10 incidents and must solve them using the information.”

This way we can check the consistency. We only test those competences that really make sense.

The training intervention must be efficient and focus on the Problem.

We must check if this design is creative, fun, cheap and effective. It also must have exercises to develop transfer skills. We must be sure this design has all aspects built in to ensure the competences required for the wish situation are developed, to help us solve the problem. If not, then we must redesign the intervention.
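The consistency checks can be kept at hand as a simple checklist. The following is a minimal Python sketch, not part of the original model: the class name `EightFields`, the field names, and the helper functions are illustrative assumptions, with the eight boxes named as in the worked example in this article. It only lists the vertical and horizontal questions to ask; judging whether the boxes are really consistent remains a human task.

```python
from dataclasses import dataclass, fields


@dataclass
class EightFields:
    """One text description per box (names are illustrative assumptions)."""
    # Left side: needs/wish steps
    problem: str = ""
    wish: str = ""
    competences: str = ""
    intervention: str = ""
    # Right side: realization steps
    business_results: str = ""
    functioning: str = ""
    test_prove: str = ""
    learning_process: str = ""


# Each 'wish-box' is checked against the evaluation box on the same level.
HORIZONTAL_PAIRS = [
    ("problem", "business_results"),
    ("wish", "functioning"),
    ("competences", "test_prove"),
    ("intervention", "learning_process"),
]

# Each box is checked against the box above it, bottom to top.
VERTICAL_CHAINS = [
    ["intervention", "competences", "wish", "problem"],
    ["learning_process", "test_prove", "functioning", "business_results"],
]


def empty_fields(model):
    """Return the names of boxes that have not been filled in yet."""
    return [f.name for f in fields(model) if not getattr(model, f.name).strip()]


def review_questions():
    """List the consistency questions to discuss during design."""
    questions = []
    for left, right in HORIZONTAL_PAIRS:
        questions.append(f"Does '{right}' evaluate what '{left}' describes?")
    for chain in VERTICAL_CHAINS:
        for lower, upper in zip(chain, chain[1:]):
            questions.append(f"Will '{lower}' really lead to '{upper}'?")
    return questions


if __name__ == "__main__":
    model = EightFields(problem="Incident costs are too high",
                        wish="Helpdesk solves 60% of Windows 7 calls")
    print("Still to fill in:", empty_fields(model))
    for question in review_questions():
        print("-", question)
```

During an intake, only the top boxes would be filled in, so `empty_fields` gives a quick view of what the trainer still has to design.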

How to use the 8-fields model?

It is a powerful model, but how can we create value from it?

During Intake with the customer

When a customer agrees to use a Business Simulation (Apollo 13) we will have an intake with the customer. We can then use the 8-fields model to analyze the training needs of this customer. During this session we will focus on the top 4 boxes.

PROBLEM – BUSINESS RESULTS

WISH – FUNCTIONING

Examples of questions:

- What Problem do you need to solve and what are the Business Results you will see if the Problem is solved?

- How do you see your employees working (Wish) after the Problem is solved? What is the desirable behaviour we will see?

- How do you see the Transfer of Knowledge? What actions are you going to take to create Business Results? How will the line managers and team managers facilitate people in applying the new knowledge?

- How are you going to measure the Business Results?

- Who will be responsible for supporting the transfer of knowledge?

After this intake session, it is the challenge of the trainer/consultant to design the lower 4 boxes.

To Design a training intervention

If you must develop a completely new training, then you will use all 8 fields of the model. Step by step you will fill in all the boxes and check the consistency.

A full example:

A Dutch library contacted one of our partners with the request “I want to run 2 Apollo 13 Business simulations”. I went there to talk to the IT Manager and the ITSM project manager, put 8 pieces of paper on the wall, and we started exploring the 8 fields. Below you will find the results after 2 hours of exploring.

| Needs/Wish steps | Realization steps |
| --- | --- |
| Problem – We are starting to implement a tool, and people do not see the benefits. No customer focus; customers are not satisfied and are talking about doing ‘IT’ themselves. No use of the Product Services Catalog; we are reinventing the wheel (rework, wasted costs). | Business Results – We see that everybody is using the (expensive) tool and there are no negative discussions about the ‘why’. We are using the tool in such a way that we save money (at least the investment in the tool) and time, and deliver better services with less energy. Does the customer satisfaction survey show an improvement from 5.6 to 7.0? By using the PSC we have a better relationship with the customers and it is easier to discuss the services and the performance we need to deliver. |
| Wish – Everybody will use the tool. We all use the information to steer and to control. We also use the information to solve incidents. Customers like to come to us; we are quick and we are proactive. 80% of our work is described in a Product Service Catalog; this way we can offer standard services, customers know what they can expect, and we have our KPIs. | Functioning – We will ask a set of 20 questions directly after the simulation, and we will ask the same questions after 2 months. After the simulation, the 2 team leads will make an action plan with their teams to work on the lessons learned. Managers will focus on the problem for the next 3 months and correct and reflect on ‘the Wish-situation’. |
| Competences – Buy-in for the tool: people show the benefits, know how to use it, and know its value. Re-using information. We know how to describe our standard services. We know how to manage and steer on KPIs and agreements. | Test/Prove – We will reflect on the following items during the simulation: |

- How is the tool used during the simulation, and what are the benefits for each of us?

- Do we have a happy customer? Why, or why not?

- How many standard services do we have?

- Are we able to steer and control our KPIs?

Intervention – We first create a list of 20 questions we are interested in, so that all 30 participants can answer them at the start of the simulation. These questions are at level 3 of our model and are there to measure changes in behavior and attitude (the main issues for success). During the Apollo 13 simulation we will focus on:

- customer satisfaction (crew is a demanding customer, just like the real customer).

- using the tool (using flipcharts to document incidents, let Flight Director do his own reporting using the tool, let Incident Manager use the tool).

- focus on Standard Services (using the Request Models in the simulation).

After the simulation:

- the team leaders will make an action plan with their teams, based on the lessons learned.

- there will be a newsletter containing the results of the simulation and the action plan.

- during the next 10 meetings, the transfer of lessons learned will be on every meeting agenda.

- the trainer will come back each month (3 times) to discuss the status and possible issues.

Learning Process – Did we find this simulation useful? What kind of lessons learned did you capture, and which of them can we use in our work? Did you learn how to apply the lessons learned during the simulation? Did you gain energy and belief in the outcome, so that you are able to work on the issues?

The outcome before the simulation

1. 20% saw the need for a tool

2. 30% did not know why the customers were not happy

3. 80% did not know what the value of a Service Catalog was

4. Low customer satisfaction score

5. Poor first call resolution rate

The outcome directly after the simulation

1. 60% of the people saw the benefits of the tool

2. 80% discovered why the customers were not happy with the service

3. 10% did not know what the value of a Service Catalog was

4. Customer satisfaction at the time of the simulation was 6.0

5. The Service desk had little consistent access to proven work-arounds
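The shift between the two measurements can be expressed in percentage points. A minimal Python sketch follows, using only the figures listed above; the dictionary keys and the function name `point_change` are illustrative assumptions. It also assumes that ‘saw the need for a tool’ and ‘saw the benefits of the tool’ measure the same thing, which the lists do not state explicitly. Item 2 is left out because the before and after statements are phrased differently and cannot be compared directly.

```python
# Percentages taken from the two lists above (items 1 and 3 only).
before = {
    "saw the need for / benefits of the tool": 20,
    "did not know the value of a Service Catalog": 80,
}
after = {
    "saw the need for / benefits of the tool": 60,
    "did not know the value of a Service Catalog": 10,
}


def point_change(before, after):
    """Return the change in percentage points for each shared measure."""
    return {name: after[name] - before[name] for name in before if name in after}


if __name__ == "__main__":
    for name, change in point_change(before, after).items():
        # Prints +40 for the tool measure and -70 for the Service Catalog measure.
        print(f"{name}: {change:+d} percentage points")
```

Repeating the same questions after 2 months, as planned in the Functioning box, would add a third measurement to compare against.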

After one month

- Team leads made their action plans and had their 15 minutes of focus during each meeting.

- None of the employees had negative discussions about the tool.

- Process managers were starting to use the tool for reporting.

- The Service Desk was starting to use the information from the tool to solve incidents; this was already having an impact on first call resolution capabilities.

- There was a positive atmosphere in the whole team, and they were using the word Apollo at least once a day in a way of reinforcing behaviour and confronting each other with agreements and responsibilities.

- Customer satisfaction had improved with regard to timely resolution and more proactive information on status.

Tips and Tricks using the 8-Fields model

- Make sure your interventions combine the 4 learning types: reading, listening, doing and watching.

- Integrate learning and working into your intervention. For example: interview people, take the results to training, work on it and bring it back into work.

- During the training or serious game capture improvement suggestions that need to be taken away and applied.

- Ensure that people attending the training or serious game are aware of the problem to be solved, what is expected from them following the training intervention, and how the learning is to be transferred into ‘functioning’.

- Integrate Transfer Skills exercises in your training interventions.

- Define the measures and the targets of the 4 levels of Kirkpatrick.

- Monitor on a regular basis at all 4 levels; evaluate and improve.

- At levels 3 and 4, increase the frequency of monitoring, evaluating and improving; this will bring you closer to real Business Results.

- Make sure you do intakes with the right people: problem owner, business manager, team leader et cetera.